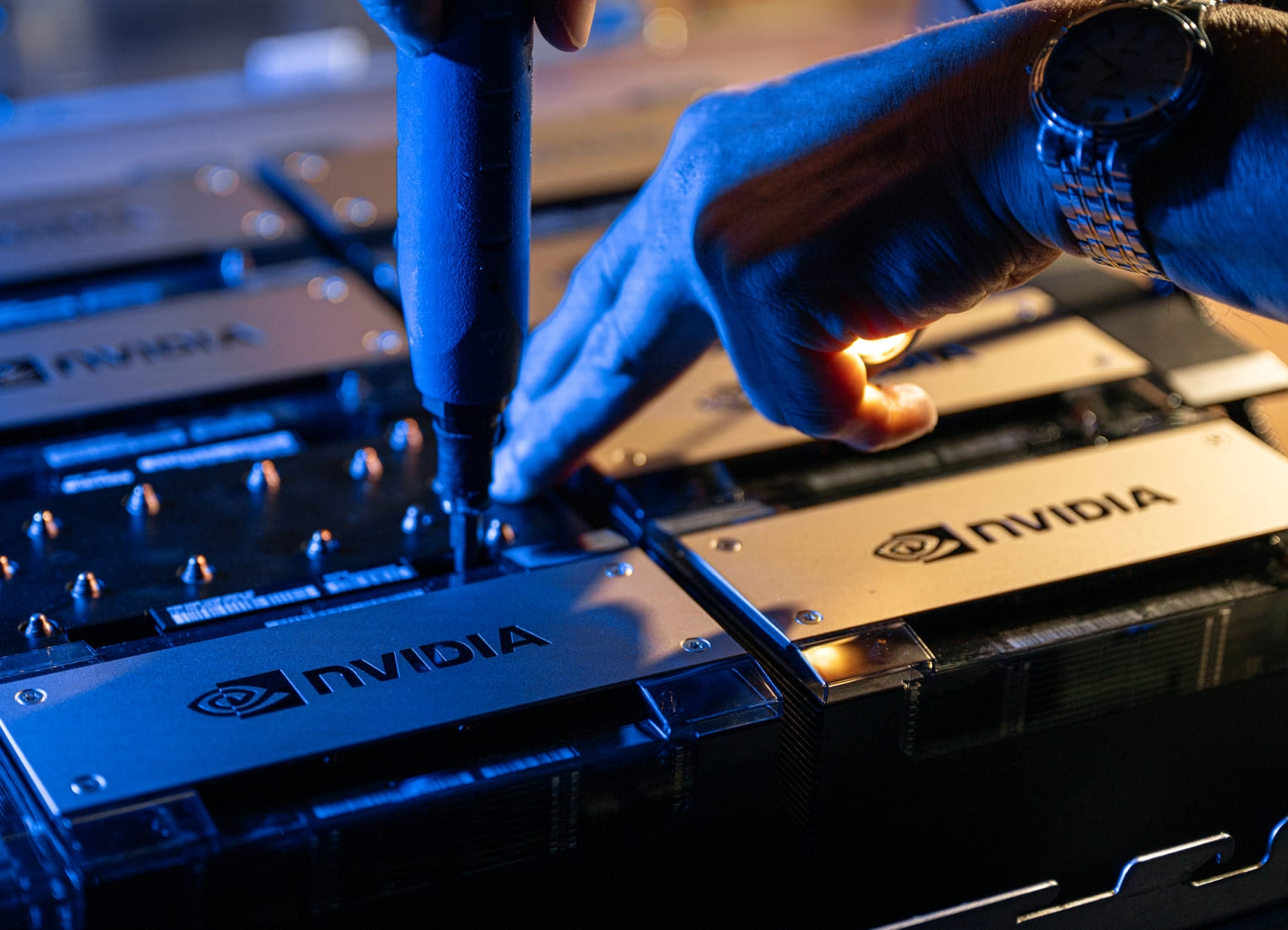

CoreWeave is proud to be among the first providers to offer cloud instances with NVIDIA HGX H100 supercomputers.

NVIDIA’s HGX H100 platform represents a major leap forward for the AI community, enabling up to seven times better efficiency in high-performance computing (HPC) applications, up to nine times faster AI training on the largest models and up to 30 times faster AI inference than the NVIDIA HGX A100.

This speed, combined with the lowest NVIDIA GPUDirect network latency on the market through the NVIDIA Quantum-2 InfiniBand platform, reduces the training time of AI models to “days or hours instead of months.”

“CoreWeave’s new offering of instances featuring NVIDIA HGX H100 supercomputers will enable customers the flexibility and performance needed to power large-scale HPC applications.”

– Dave Salvator, Director of Product Marketing, NVIDIA

Enterprise Infrastructure for Scale-Up Stage Companies

Get H100s, A100s, A5000s, VMs, flexible storage, high-performance networking and more, all delivered seamlessly through CoreWeave’s Kubernetes-native infrastructure. CoreWeave is purpose-built for large-scale GPU-accelerated workloads, specialized to serve the most demanding AI and machine learning applications.

“This validates what we’re building and where we’re heading. CoreWeave’s success will continue to be driven by our commitment to making GPU-accelerated compute available to startup and enterprise clients alike. Investing in the NVIDIA HGX H100 platform allows us to expand that commitment, and our pricing model makes us the ideal partner for any companies looking to run large-scale, GPU-accelerated AI workloads.”

– Michael Intrator, CEO and co-founder, CoreWeave

CoreWeave leverages a range of open-source Kubernetes projects, integrates with best-in-class technologies like Determined.AI and provides support for open-source AI models including Stable Diffusion, GPT-NeoX-20B and BLOOM.

CoreWeave’s pricing model saves clients up to 80% over large, generalized public clouds, for two reasons. First, clients pay only for the highly configurable HPC resources they use (and never pay for idle time).

Second, CoreWeave’s Kubernetes-native infrastructure and networking architecture produce performance advantages, including industry-leading spin-up times and responsive auto-scaling capabilities that allow clients to use compute more efficiently.

Ready to Check Out the HGX H100?

Learn more about the NVIDIA HGX H100 today, or reserve now.