Agentic AI refers to AI systems that can plan, take action, and complete tasks autonomously using a combination of reasoning, memory, and external tools.

Unlike traditional AI systems that respond to a single prompt, agentic AI operates as an active system. It can break down goals into steps, decide which actions to take, and adjust its approach based on results.

In practice, agentic AI is often powered by large language models (LLMs) combined with tool use, memory, and orchestration frameworks. These systems are increasingly used to automate workflows, manage complex processes, and coordinate across software systems with minimal human input.

How does agentic AI work?

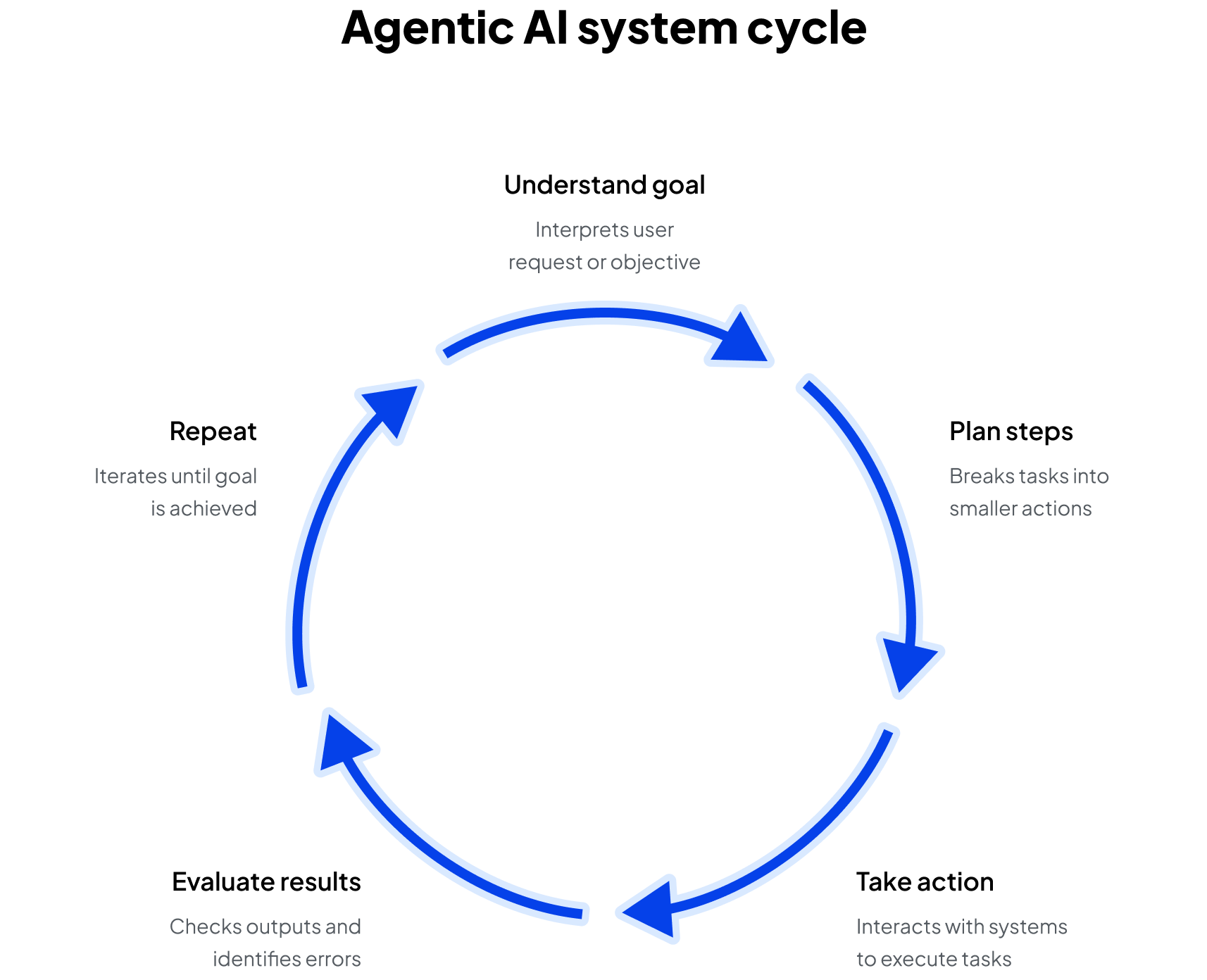

At a high level, an agentic AI system operates as a closed-loop control system that continuously interprets goals, takes action, and updates its strategy based on results.

Most modern implementations follow a structured execution loop:

- Goal interpretation

The system parses a user request or system-triggered objective into a structured representation. This often involves prompt decomposition, where a large language model (LLM) translates natural language into actionable steps.

- Planning and task decomposition

The agent breaks the objective into smaller sub-tasks. This may include sequential planning (step-by-step workflows), dynamic planning (adjusting steps mid-execution), or tree-based exploration (evaluating multiple possible paths).

- Tool selection and execution

The agent determines which tools to use for each step. Tools can include APIs (e.g., CRM systems and financial data feeds), code execution environments, databases or vector stores, and external services (e.g., search and retrieval).

- State tracking and memory updates

The system maintains context across steps using short-term memory (conversation or task state) or long-term memory (stored embeddings, logs, or knowledge bases).

- Evaluation and feedback

After each action, the agent evaluates results using internal reasoning (LLM self-reflection), external signals (success/failure responses, metrics), and rule-based validation layers.

- Iteration and recovery

If results are incomplete or incorrect, the agent adjusts its plan and retries. This enables error recovery, adaptive workflows, and long-running task execution.

This loop allows agentic systems to handle multi-step workflows, recover from failures, and adapt in real time. As a result, agentic AI moves beyond traditional prompt-response AI and can run stateful processes in production environments.
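The execution loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `interpret_and_plan`, `evaluate`, and `run_agent` are hypothetical names, the planner is a trivial string split standing in for an LLM call, and tools are plain callables looked up by step name.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    goal: str
    plan: list[str] = field(default_factory=list)
    # Each entry is (step name, result or error text): the state the loop tracks.
    history: list[tuple[str, str]] = field(default_factory=list)


def interpret_and_plan(goal: str) -> list[str]:
    # Stand-in for an LLM call (assumption): split the goal on " then ".
    return [part.strip() for part in goal.split(" then ")]


def evaluate(result: str) -> bool:
    # Stand-in for a validation layer (assumption): any non-empty result passes.
    return bool(result)


def run_agent(goal: str, tools: dict[str, Callable[[], str]],
              max_retries: int = 2) -> AgentState:
    state = AgentState(goal=goal, plan=interpret_and_plan(goal))
    for step in state.plan:
        tool = tools[step]  # tool selection: one tool per step name
        for _attempt in range(max_retries + 1):
            try:
                result = tool()  # execution
            except Exception as exc:
                # Record the failure, then retry (iteration and recovery).
                state.history.append((step, f"error: {exc}"))
                continue
            state.history.append((step, result))  # state update
            if evaluate(result):  # evaluation and feedback
                break
        else:
            raise RuntimeError(
                f"step {step!r} failed after {max_retries + 1} attempts")
    return state
```

Calling `run_agent("fetch then summarize", tools)` with a `tools` dictionary that maps `"fetch"` and `"summarize"` to callables runs both steps in order and retries a step whose tool raises. A real system would replace the fixed plan with one that can be revised mid-execution.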

Core components of agentic AI

Agentic AI systems are composed of multiple interacting layers that together enable autonomous reasoning, action, and control.

Reasoning and planning layer

This layer determines what the system should do next. It is typically powered by an LLM that evaluates the current state, interprets goals, and sequences actions. Techniques such as chain-of-thought reasoning, ReAct (reasoning + acting), and structured planning prompts help the system break down complex tasks and decide among multiple possible approaches.

Tooling and execution layer

This layer connects the agent to external systems and enables it to take real action. Tools are exposed as structured functions with defined inputs and outputs, allowing the model to interact with APIs, databases, code execution environments, and enterprise software.

Many modern implementations rely on function calling or tool invocation frameworks, where the LLM generates structured commands that trigger real operations. In production environments, this layer is tightly controlled to enforce permissions, validate inputs, and prevent unsafe actions.
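As a rough sketch of this pattern, the snippet below treats a tool call as a JSON object with a `name` and `arguments`, then checks permissions and argument types before running anything. The JSON shape, the `Tool` class, the `invoke` function, and the `add_numbers` tool are all assumptions made for illustration; real function-calling APIs define their own schemas.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Tool:
    name: str
    func: Callable[..., Any]
    params: dict[str, type]  # expected argument names and their types


REGISTRY: dict[str, Tool] = {}


def register(tool: Tool) -> None:
    REGISTRY[tool.name] = tool


def invoke(call_json: str, allowed: set[str]) -> Any:
    """Validate and execute a model-generated tool call (hypothetical format)."""
    call = json.loads(call_json)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    if name not in allowed:
        # Permission check happens before any side effect.
        raise PermissionError(f"tool not permitted: {name}")
    tool = REGISTRY[name]
    if set(args) != set(tool.params):
        raise ValueError(f"expected arguments {sorted(tool.params)}")
    for key, expected in tool.params.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"argument {key!r} must be {expected.__name__}")
    return tool.func(**args)


# Illustrative tool; a real deployment would wrap an API or database call.
register(Tool("add_numbers", lambda a, b: a + b, {"a": int, "b": int}))
```

For example, `invoke('{"name": "add_numbers", "arguments": {"a": 2, "b": 3}}', allowed={"add_numbers"})` runs the tool, while the same call with an empty `allowed` set raises `PermissionError` before the function is touched.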

Memory systems

Memory allows the agent to maintain context and improve decision-making over time.

Short-term memory stores the current task state, including intermediate steps and recent outputs, ensuring continuity within a workflow. Long-term memory persists information across sessions, often using vector databases or retrieval systems to enable context-aware reasoning and retrieval-augmented generation (RAG).
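The two memory types can be illustrated with a small sketch. Short-term memory is modeled here as a bounded buffer of recent steps, and long-term memory as a store searched by similarity. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and the class and function names are hypothetical.

```python
import math
from collections import Counter, deque


def embed(text: str) -> Counter:
    # Toy "embedding" (assumption): word counts instead of a learned vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Counter returns 0 for missing keys, so absent words contribute nothing.
    dot = sum(a[token] * b[token] for token in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class LongTermMemory:
    """Persists text with its vector and retrieves the closest matches."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def store(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def retrieve(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self._items,
                        key=lambda item: cosine(q, item[1]),
                        reverse=True)
        return [text for text, _ in ranked[:k]]


def make_short_term_memory(max_items: int = 3) -> deque:
    # Keeps only the most recent steps; older entries drop off automatically.
    return deque(maxlen=max_items)
```

In a retrieval-augmented setup, the text returned by `retrieve` would be added to the model's prompt alongside the contents of the short-term buffer. Production systems typically swap the toy pieces for an embedding model and a vector database.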

State and orchestration layer

This layer manages how tasks are executed over time; it tracks state transitions, enforces execution order, and handles retries, branching logic, and failure recovery.

Orchestration frameworks such as LangGraph or AutoGen are often used to explicitly define these workflows, enabling developers to control how agents move through complex, multi-step processes.
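To make the idea concrete without relying on any particular framework's API, here is a plain-Python sketch of an orchestrator. Each node is a function that reads and updates a shared state dictionary and returns the name of the next node, which gives branching; the runner adds retries and a failure route. `run_workflow`, `END`, and the example nodes are hypothetical names, not LangGraph or AutoGen constructs.

```python
from typing import Callable

END = "END"
Node = Callable[[dict], str]  # takes shared state, returns the next node name


def run_workflow(nodes: dict[str, Node], start: str, state: dict,
                 max_retries: int = 2, on_failure: str = END,
                 max_steps: int = 50) -> list[str]:
    """Run nodes in the order they select, retrying a node that raises."""
    trace: list[str] = []
    current = start
    while current != END:
        if len(trace) >= max_steps:
            raise RuntimeError("workflow exceeded max_steps")
        trace.append(current)  # record the state transition
        for _attempt in range(max_retries + 1):
            try:
                next_node = nodes[current](state)
                break
            except Exception as exc:
                state["last_error"] = str(exc)
        else:
            # All attempts failed: hand control to the failure route.
            next_node = on_failure
        current = next_node
    return trace


# Example nodes (illustrative only).
def fetch(state: dict) -> str:
    state["attempts"] = state.get("attempts", 0) + 1
    if state["attempts"] < 2:
        raise ConnectionError("transient failure")
    state["rows"] = 3
    return "check"


def check(state: dict) -> str:
    # Branching logic: choose the next node from the current state.
    return "report" if state["rows"] > 0 else "alert"


def report(state: dict) -> str:
    state["status"] = "reported"
    return END


def alert(state: dict) -> str:
    state["status"] = "alerted"
    return END


NODES = {"fetch": fetch, "check": check, "report": report, "alert": alert}
```

Running `run_workflow(NODES, "fetch", {})` retries the failing `fetch` node once and then follows the `check` branch to `report`. The `max_steps` bound is a design choice that keeps a misrouted workflow from looping forever.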

Environment and input layer

The environment defines what the agent can observe and respond to. Inputs may include user requests, system-generated events, or external data streams.

This layer provides the raw signals that drive decision-making, allowing the agent to operate in dynamic environments where conditions can change in real time.

Safety and governance layer

This layer ensures that agent behavior remains aligned with user intent and organizational policies. Guardrails may include permissioning systems, output validation, rate limiting, and human-in-the-loop checkpoints.

As agents gain the ability to take real-world actions, this layer becomes critical for maintaining trust, security, and compliance.
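A simplified sketch of how such guardrails might be combined is shown below. It checks an allowlist, applies a sliding-window rate limit, and asks a reviewer callback before sensitive actions. The `Guardrails` class, its parameters, and the `approve` callback are assumptions for illustration; the injectable `clock` exists only to make the behavior easy to test.

```python
import time
from collections import deque
from typing import Callable


class Guardrails:
    """Authorizes an action before the agent is allowed to execute it."""

    def __init__(self, allowed: set[str], needs_approval: set[str],
                 max_calls: int, window_s: float,
                 approve: Callable[[str], bool],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.allowed = allowed
        self.needs_approval = needs_approval
        self.max_calls = max_calls
        self.window_s = window_s
        self.approve = approve  # human-in-the-loop checkpoint (hypothetical)
        self.clock = clock
        self._calls: deque = deque()  # timestamps of recent authorized calls

    def authorize(self, action: str) -> None:
        # 1. Permissioning: the action must be on the allowlist.
        if action not in self.allowed:
            raise PermissionError(f"{action!r} is not permitted")
        # 2. Rate limiting: drop timestamps outside the window, then count.
        now = self.clock()
        while self._calls and now - self._calls[0] >= self.window_s:
            self._calls.popleft()
        if len(self._calls) >= self.max_calls:
            raise RuntimeError("rate limit exceeded")
        # 3. Human approval for sensitive actions.
        if action in self.needs_approval and not self.approve(action):
            raise PermissionError(f"{action!r} was rejected by a reviewer")
        self._calls.append(now)
```

An agent would call `authorize(action)` immediately before executing a tool and treat any exception as a refusal. Output validation, the fourth guardrail mentioned above, would run after execution and is omitted here for brevity.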

What are AI agents?

AI agents are the execution units of agentic AI systems. Each agent is designed to operate autonomously within a defined scope, using a combination of reasoning, memory, and tool access.

A typical agent architecture includes:

- Decision engine: often an LLM that determines the next action based on the current state and goals

- Policy or control logic: defines constraints, priorities, and rules for how decisions are made; this can include prompt templates, system instructions, or external policies

- Memory module: stores intermediate results, historical context, and retrieved knowledge to inform decisions

- Tool interface: enables interaction with external systems through APIs, function calls, or execution environments

- Execution loop: a controller that repeatedly observes state, decides on an action, executes, and updates state

Agents can vary widely in complexity. Some are lightweight wrappers around an LLM with tool access, while others are highly structured systems with explicit planning, validation, and coordination mechanisms.

In production environments, agents are often designed to be bounded and observable, meaning their actions are logged, auditable, and constrained within defined limits.
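The components listed above, together with the bounded and observable properties, might be wired together roughly as follows. This is a sketch under simplifying assumptions: the decision engine is any callable that maps memory to the next action name (an LLM in practice), the policy is a plain allowlist, and the field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    # Decision engine: given memory so far, return the next action or None.
    decide: Callable[[list[str]], Optional[str]]
    # Tool interface: action name -> callable that performs it.
    tools: dict[str, Callable[[], str]]
    # Policy: the set of actions this agent may take.
    allowed: set[str]
    # Bound: hard cap on the number of loop iterations.
    max_steps: int = 5
    memory: list[str] = field(default_factory=list)
    # Observability: one record per decision, whether or not it ran.
    audit_log: list[dict] = field(default_factory=list)

    def run(self) -> list[str]:
        for step in range(self.max_steps):
            action = self.decide(self.memory)  # observe state and decide
            if action is None:  # the engine signals completion
                break
            permitted = action in self.allowed and action in self.tools
            self.audit_log.append(
                {"step": step, "action": action, "permitted": permitted})
            if not permitted:
                break  # stay inside the defined limits
            self.memory.append(self.tools[action]())  # execute, update state
        return self.memory
```

Because every decision is appended to `audit_log` before it executes, a blocked action still leaves a record, which is the property that makes the agent auditable.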

Single-agent vs multi-agent systems

Agentic AI systems can be designed with a single agent handling an entire workflow or multiple agents working together. The right approach depends on the task's complexity, the need for specialization, and the level of coordination required across systems.

Single-agent systems

Single-agent systems rely on one agent to manage the full lifecycle of a task, including planning, execution, and validation. The agent uses its reasoning capabilities to break down a goal, select tools, and iterate until completion, all within a single control loop.

This approach is simpler to design and deploy, making it well-suited for straightforward workflows such as personal assistants, lightweight automation, or prototyping. However, as tasks become more complex, a single agent can become a bottleneck, especially when it needs to handle multiple responsibilities or operate across different domains.

Multi-agent systems

Multi-agent systems distribute work across multiple specialized agents, each responsible for a specific function. For example, one agent may focus on planning, another on executing tasks, and a third on validating outputs or enforcing constraints.

These agents coordinate through shared memory, message passing, or an orchestration layer that manages dependencies and communication. This architecture enables parallelism, specialization, and greater flexibility, making it better suited for complex, large-scale workflows. The tradeoff is increased system complexity, particularly in coordination, debugging, and observability.
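The planner, executor, and validator roles can be sketched with simple message passing. In this illustration each agent is a function that receives one message and returns new messages, and a queue routes them by recipient. The `Message` fields, the agent functions, and the `"outbox"` destination are hypothetical, and the uppercase transformation merely stands in for real work.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Message:
    recipient: str
    kind: str
    payload: str


def planner(msg: Message) -> list[Message]:
    # Splits a goal into tasks (stand-in for LLM-based planning).
    return [Message("executor", "task", part.strip())
            for part in msg.payload.split(",")]


def executor(msg: Message) -> list[Message]:
    # Performs the task; uppercasing is a placeholder for real execution.
    return [Message("validator", "result", msg.payload.upper())]


def validator(msg: Message) -> list[Message]:
    # Enforces a simple constraint: results must be non-empty.
    verdict = "accepted" if msg.payload.strip() else "rejected"
    return [Message("outbox", verdict, msg.payload)]


AGENTS = {"planner": planner, "executor": executor, "validator": validator}


def run(goal: str) -> list[Message]:
    """Route messages between agents until the queue is empty."""
    queue = deque([Message("planner", "goal", goal)])
    outbox: list[Message] = []
    while queue:
        msg = queue.popleft()
        if msg.recipient == "outbox":
            outbox.append(msg)
            continue
        queue.extend(AGENTS[msg.recipient](msg))
    return outbox
```

This sketch processes messages one at a time, so it shows specialization and coordination but not parallelism. Because agents only communicate through messages, the queue could be replaced by a concurrent broker without changing the agent functions.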

Real-world applications and use cases

Agentic AI is increasingly deployed in production systems that require coordination across tools, data sources, and decision points.

- Enterprise workflow automation: agents orchestrate multi-step processes across systems such as CRMs, data warehouses, and internal tools

- AI copilots and operational assistants: these systems go beyond chat interfaces by executing tasks directly, such as scheduling meetings, conducting research, or managing internal workflows

- Software development and DevOps: integration with version control systems, CI/CD pipelines, and observability tools helps support end-to-end development workflows, where agents can write code, run tests, debug errors, and deploy applications

- Customer operations: agents manage support pipelines by classifying requests, generating responses, routing tickets, and resolving common issues

- Scientific research and simulation: in domains like drug discovery or materials science, agents design experiments, run simulations, and analyze results, accelerating research cycles

Across these use cases, the defining shift is from assistive AI to operational AI: systems that not only provide insights but also take action.

For example, in modern DevOps platforms like Harness, AI-driven systems can monitor deployments, detect failures, trigger rollbacks, and optimize infrastructure usage without manual intervention. These workflows combine real-time decision-making with automated execution, reducing operational overhead and improving system reliability.

Benefits and risks of agentic AI

Like any transformative technology, agentic AI brings both opportunity and responsibility. Its ability to act independently allows organizations to automate complex processes and scale intelligence across systems, but that same autonomy introduces new technical and ethical challenges. Understanding both sides of the equation is key to deploying agentic systems effectively and safely.

Benefits

- Autonomy and scalability: agents can run complex workflows continuously, freeing people for higher-value tasks and increasing the volume and scale of work one person can handle

- Speed and efficiency: decisions are made in real time, often faster than human teams can coordinate

- Continuous learning: through feedback loops and stored memory, agents can improve performance on recurring tasks without retraining the underlying model

- Consistency: agentic systems can reduce variability in repetitive or high-stakes tasks

Risks

- Loss of control: as agents act independently, ensuring oversight and interpretability becomes essential

- Ethical and safety concerns: poorly aligned goals can produce unintended outcomes or biased behavior

- System complexity: multi-agent systems introduce coordination challenges and unpredictability

- Security vulnerabilities: autonomous access to tools and data expands the potential attack surface
