AI inference is the process of taking a trained machine learning model and putting it to work on new data. When someone asks “what is inference in AI?” or “what does inference mean?”, the simplest inference definition is that training builds the model’s knowledge, and inference is when that knowledge is applied in the real world. It’s what makes AI more than just a research project; it makes it a working technology that businesses, creators, and everyday people can rely on.

The rise of generative AI has pushed AI inference into the limelight; it’s no longer just AI training that matters. Big foundation models might be trained in large, infrequent cycles and then deployed, but the model is then used millions or billions of times. Think ChatGPT responding to queries, real-time translation tools listening and replying, or image generators spinning up art on demand. Those are all powered by AI inference.

AI inference vs. AI training

To understand AI inference, it helps to distinguish it from training:

- AI training: Models learn patterns from large datasets by adjusting internal parameters

- AI inference: Models use those learned parameters to process new data and generate outputs

Training is typically performed offline on large compute clusters, while inference runs in production environments and must respond in real time. As a result, inference systems are optimized for low latency, consistent performance, and high throughput at scale.

Both phases are essential, but they place different demands on infrastructure and system design.

How AI inference works

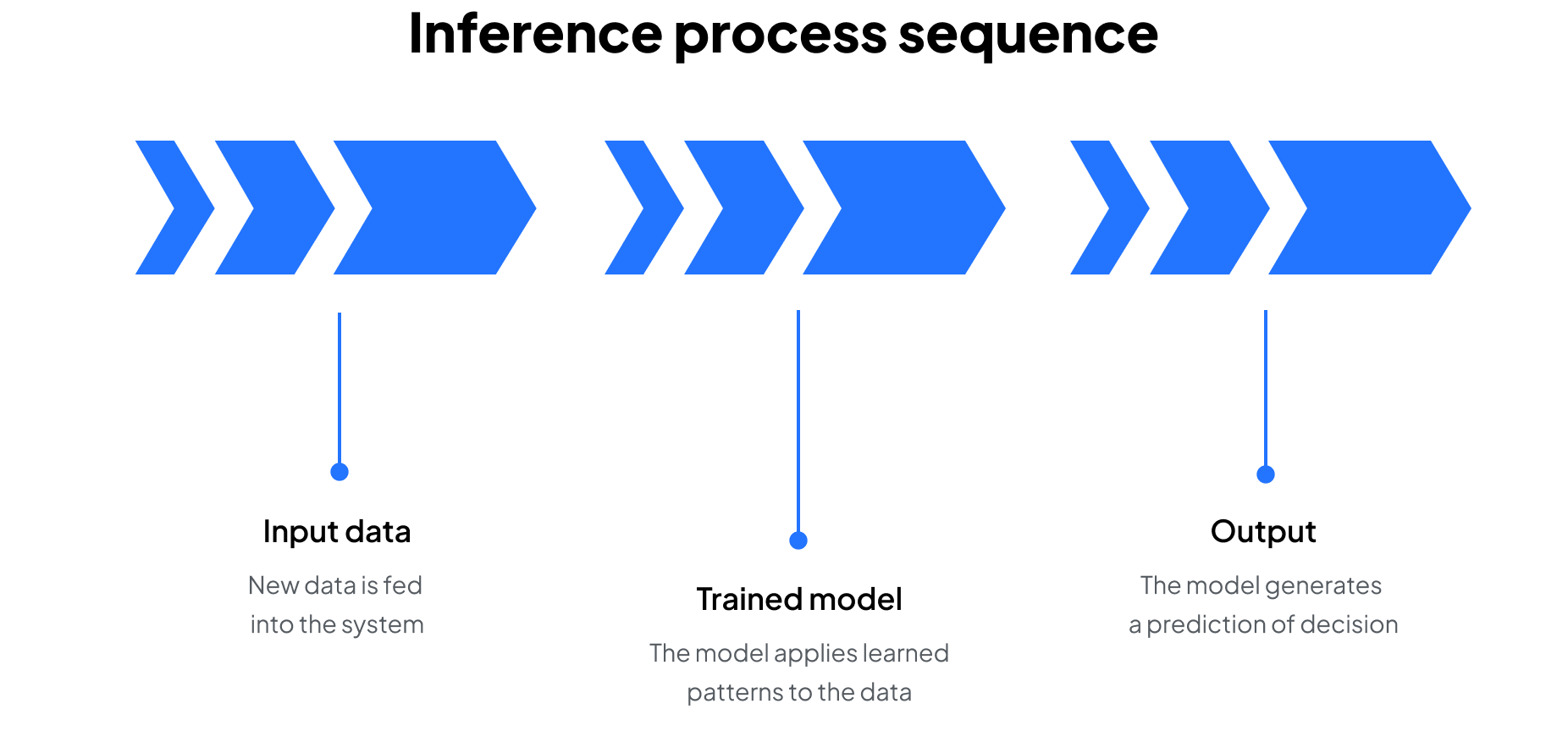

At a high level, inference happens when a trained model receives new input data and generates an output. The model does not learn during this phase. It applies patterns learned during training to make a prediction or decision.

Inference typically follows three steps:

- Input preparation: incoming data is formatted into a structure the model can process

- Model execution: the data passes through the model, where learned patterns are applied

- Output generation: the model returns a result, such as a prediction, classification, or score

At a high level, inference happens when a trained model receives new input data and generates an output. The model does not learn during this phase. It applies patterns learned during training to make a prediction or decision.

While this process is straightforward, running it at scale introduces complexity. A single prediction is relatively lightweight, but production systems must handle large volumes of requests in real time.

To do this effectively, inference systems are designed to balance:

- Low latency so responses are delivered quickly

- High throughput to handle many requests simultaneously

- Cost efficiency to keep operations scalable as usage grows

This is where infrastructure becomes critical. GPUs and other accelerators handle the underlying compute, while orchestration systems distribute workloads to maintain performance and reliability.

In short, inference is the operational phase of AI, where models turn input data into outputs in real time.

Essential infrastructure components of inference

Running inference at scale isn’t just about the model; it depends on the underlying infrastructure that makes predictions fast, reliable, and affordable. Several components work together to keep inference pipelines efficient and production-ready.

Together, these components form the backbone of modern inference systems. They set the stage for the challenges that follow by balancing speed, scale, and cost in real-world deployments.

Common challenges with AI inference

Even though AI inference is lighter than AI training, it comes with its own hurdles:

Latency vs. accuracy trade-offs

Running models in lower precision (like INT8 instead of FP32) speeds up inference and boosts throughput, but it can also chip away at accuracy. The trick is finding the balance. Ultra-low latency is critical in areas like ad placement or fraud detection, while health care and safety applications often favor precision.

Solution: Techniques like quantization and mixed precision keep latency low without sacrificing too much accuracy.

Hardware bottlenecks

CPUs can run inference, but they struggle to deliver real-time performance at scale. That’s why GPUs and purpose-built accelerators are often essential. Still, even accelerators can hit limits when model sizes balloon or memory bandwidth becomes a choke point.

Solution: Leverage high-memory GPUs or distributed inference strategies to avoid bottlenecks.

Scaling to millions of queries

Serving one request is easy; serving millions simultaneously is where orchestration, autoscaling, and load balancing come in. Without the right infrastructure, a sudden spike in traffic can swamp even the most powerful system. Cold start latency—when new instances spin up to handle demand—can also introduce delays if not properly managed.

Solution: Container orchestration platforms such as Kubernetes, along with caching, batching, and pre-warmed instances, help systems stay responsive under load.

Cost efficiency

Inference may be lighter than training, but it’s the phase that runs nonstop. At scale, costs pile up quickly. Optimizations such as batching, caching, and quantization are what make long-term AI deployments sustainable.

Solution: Right-size hardware for workloads and apply model compression or distillation to reduce compute demand.

Real-world use cases

AI inference shows up everywhere in modern applications, embedded in the tools and services people interact with every day. From life-saving medical scans to the playlist your app queues up, AI inference is the part of AI that shows up in the moment of decision. Some common inference examples include:

Healthcare

Models trained on millions of images can analyze X-rays, MRIs, or CT scans to detect early signs of disease. AI inference enables real-time support for radiologists, helping to speed up diagnoses and improve patient outcomes. In some cases, AI systems can even prioritize urgent scans, so doctors review the most critical cases first.

Finance

AI inference powers fraud detection systems that evaluate transactions in milliseconds. Every credit card swipe or online payment is compared against patterns of legitimate and fraudulent behavior. By running AI inference at scale, banks can block suspicious activity instantly without slowing down everyday purchases.

Retail

Recommendation engines rely on AI inference to suggest products, promotions, or content that align with a customer’s interests. Whether it’s “customers also bought” lists or personalized discounts, AI inference makes shopping experiences feel more relevant, which often drives significant revenue uplift.

Autonomous systems

Self-driving cars, drones, and robots depend on AI inference to interpret their environment in real time. Identifying stop signs, tracking pedestrians, or navigating around obstacles requires low-latency predictions to keep systems safe and responsive.

Creative industries

Generative AI models use AI inference to turn text prompts into images, videos, or music. Tools like text-to-image generation or video synthesis depend on inference speed to produce high-quality creative outputs that feel fast to the end user.

The common thread? Each use case demands that AI inference is fast and delivers reliable predictions at scale. Whether the stakes are medical safety, financial security, or customer engagement, AI inference is what makes AI practical and impactful in real-world settings.